The process of hazard analysis and risk assessment (HARA) is a central building block in many safety-assurance workflows. HARA is generally needed in safety-critical environments in which users can be harmed, for example when handling heavy machinery. Another relevant use case is human-robot collaboration in industrial automation, where humans and robots share a workspace. A rigorous hazard analysis is indispensable to protect human lives and to comply with regulations. In practice, however, producing a sufficiently comprehensive list of hazardous events often requires expert workshops and multiple review cycles, which can become a bottleneck as systems and their operating contexts grow more complex.

Large language models (LLMs) seem like a natural fit for this challenge: hazard identification resembles brainstorming variations of dangerous scenarios, and LLMs can generate such candidate scenarios quickly. Free-form prompting, however, is poorly suited to safety engineering. The output is often inconsistent in structure, hard to trace back to its inputs, and difficult to review systematically, which is exactly what you want to avoid when results may influence safety decisions.

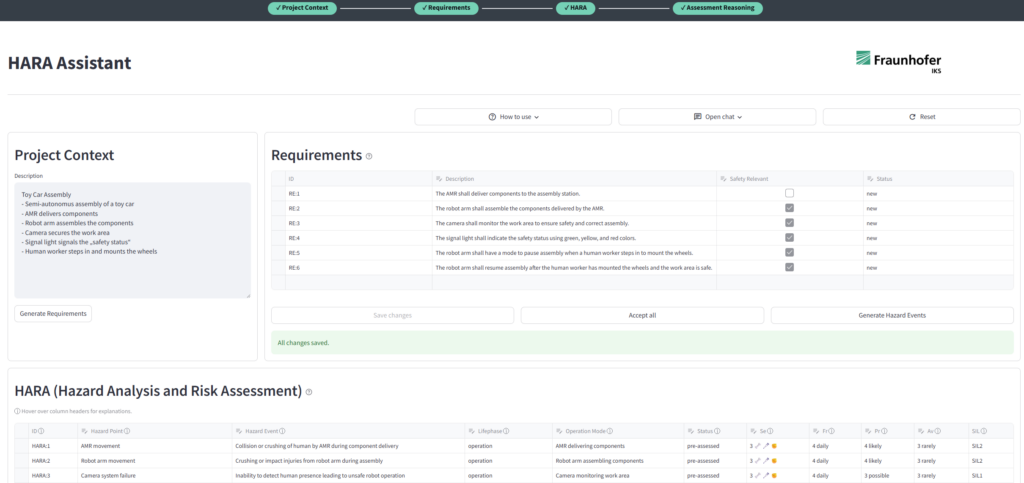

The safer approach is to constrain the interaction to a workflow that remains engineer-led and easy to review. This is the idea behind the HARA Assistant (AS-PHALT), which we are developing at Fraunhofer IKS.

Instead of asking for a final hazard list in one shot, the workflow introduces explicit checkpoints and structured outputs.

- Phase 1: Turn the system context into a shared, checkable system description.

  A safety engineer provides a short description of the system context. From it, the tool derives an initial set of system-level requirements describing what the system should do. Crucially, the engineer reviews and adjusts this intermediate artifact before moving on. This reduces ambiguity and ensures that the next step starts from a description the engineer endorses.

- Phase 2: Generate hazard candidates from the reviewed inputs in a structured form.

  Hazard candidates are generated from the reviewed description and requirements, guided by hazard categories oriented toward relevant safety standards. The results are kept structured, so engineers can quickly scan the list, remove duplicates, flag irrelevant items, and refine it iteratively.

- Phase 3: Pre-assess the risk of the identified and reviewed hazards.

  For each hazard, the tool pre-assesses the severity of consequences, the frequency of exposure, the probability of occurrence, and the avoidability of harm. From these values, Safety Integrity Levels (SILs) are calculated, which in turn determine the countermeasures and safeguards needed during the development process.
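Phase 3 can be illustrated with a short sketch. This post does not state which standard or risk matrix AS-PHALT applies; the four parameters listed resemble those of the IEC 62061 method for machinery, in which frequency (Fr), probability (Pr), and avoidance (Av) scores are summed into a class Cl and combined with a severity score Se in a matrix that yields the required SIL. The matrix entries below are an assumption modeled on that scheme and serve only as an illustration; check them against the edition of the standard you actually apply. The names `RiskParameters` and `pre_assess_sil` are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# ASSUMPTION: illustrative matrix modeled on the IEC 62061 risk-assessment scheme.
# Verify every entry against the edition of the standard you apply.
# Columns are bands of the class Cl = Fr + Pr + Av.
CLASS_BANDS = [(3, 4), (5, 7), (8, 10), (11, 13), (14, 15)]
# Rows are severity scores Se. None: no SIL required; "OM": other measures recommended.
SIL_MATRIX = {
    4: ["SIL 2", "SIL 2", "SIL 2", "SIL 3", "SIL 3"],
    3: [None, None, "SIL 1", "SIL 2", "SIL 3"],
    2: [None, None, "OM", "SIL 1", "SIL 2"],
    1: [None, None, None, "OM", "SIL 1"],
}


@dataclass(frozen=True)
class RiskParameters:
    """Hypothetical record holding the four Phase 3 parameters of one hazard."""
    severity: int     # Se, 1..4: severity of consequences
    frequency: int    # Fr, 1..5: frequency and duration of exposure
    probability: int  # Pr, 1..5: probability of occurrence of the hazardous event
    avoidance: int    # Av, 1, 3 or 5: higher means harm is harder to avoid


def pre_assess_sil(p: RiskParameters) -> Optional[str]:
    """Return the SIL, "OM", or None suggested by the illustrative matrix."""
    if p.severity not in SIL_MATRIX:
        raise ValueError(f"severity must be 1..4, got {p.severity}")
    if not 1 <= p.frequency <= 5 or not 1 <= p.probability <= 5:
        raise ValueError("frequency and probability must be 1..5")
    if p.avoidance not in (1, 3, 5):
        raise ValueError(f"avoidance must be 1, 3 or 5, got {p.avoidance}")
    risk_class = p.frequency + p.probability + p.avoidance  # Cl, always 3..15 here
    for column, (low, high) in enumerate(CLASS_BANDS):
        if low <= risk_class <= high:
            return SIL_MATRIX[p.severity][column]
    raise RuntimeError("unreachable: the bands cover 3..15")


# Hypothetical crushing hazard: Se = 3, Fr = 5, Pr = 3, Av = 3, so Cl = 11.
hazard = RiskParameters(severity=3, frequency=5, probability=3, avoidance=3)
print(pre_assess_sil(hazard))  # SIL 2
```

Consistent with the workflow above, a value computed this way is only a pre-assessment: the engineer confirms or corrects each parameter, and therefore the resulting SIL, before it drives any safety decision.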

In this use case, structure matters more than clever prompting, for three reasons:

- Reviewability improves: Structured outputs are faster to validate than long narrative responses, and easier to compare across iterations.

- Consistency improves: Fixed phases and standardized output formats reduce variability and “format drift.”

- Human responsibility stays clear: Explicit review points make it obvious where the engineer must confirm, correct, or extend the result.
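The three points above can be made concrete with a minimal Python sketch of a structured hazard-candidate record. It assumes the model is asked to return a JSON list with fixed fields; the field names, the `ReviewStatus` values, and the helpers `parse_candidates`, `suspected_duplicates`, and `ready_for_next_phase` are hypothetical and do not describe AS-PHALT's internal data model. Output that deviates from the schema is rejected rather than silently accepted (consistency), each candidate keeps a link to the reviewed requirement it came from (reviewability), and only the engineer moves a candidate out of the pending state (human responsibility).

```python
import json
from dataclasses import dataclass
from enum import Enum
from typing import List


class ReviewStatus(Enum):
    PENDING = "pending"    # generated by the model, not yet reviewed
    ACCEPTED = "accepted"  # confirmed (possibly edited) by the engineer
    REJECTED = "rejected"  # removed by the engineer, e.g. duplicate or irrelevant


@dataclass
class HazardCandidate:
    hazard_id: str
    category: str            # hazard category used to guide generation
    description: str
    source_requirement: str  # ID of the reviewed requirement it traces back to
    status: ReviewStatus = ReviewStatus.PENDING


REQUIRED_FIELDS = ("hazard_id", "category", "description", "source_requirement")


def parse_candidates(raw_json: str) -> List[HazardCandidate]:
    """Parse model output into fixed records; reject anything that drifts from the schema."""
    items = json.loads(raw_json)
    if not isinstance(items, list):
        raise ValueError("expected a JSON list of hazard candidates")
    candidates = []
    for item in items:
        if not isinstance(item, dict):
            raise ValueError("each hazard candidate must be a JSON object")
        missing = [f for f in REQUIRED_FIELDS
                   if not isinstance(item.get(f), str) or not item[f].strip()]
        if missing:
            raise ValueError(f"candidate {item.get('hazard_id')!r} lacks {missing}")
        candidates.append(HazardCandidate(**{f: item[f].strip() for f in REQUIRED_FIELDS}))
    return candidates


def suspected_duplicates(candidates: List[HazardCandidate]) -> List[str]:
    """Suggest IDs whose normalized description repeats an earlier one.

    This only flags candidates; the engineer decides whether to reject them."""
    seen = set()
    suspects = []
    for c in candidates:
        key = " ".join(c.description.lower().split())  # lowercase, collapse whitespace
        if key in seen:
            suspects.append(c.hazard_id)
        else:
            seen.add(key)
    return suspects


def ready_for_next_phase(candidates: List[HazardCandidate]) -> bool:
    """Review checkpoint: proceed only with a non-empty, fully reviewed list."""
    return bool(candidates) and all(c.status is not ReviewStatus.PENDING for c in candidates)


raw = ('[{"hazard_id": "H1", "category": "mechanical", '
       '"description": "Operator hand crushed by gripper", "source_requirement": "R3"}, '
       '{"hazard_id": "H2", "category": "mechanical", '
       '"description": "operator hand  crushed by gripper", "source_requirement": "R3"}]')
hazards = parse_candidates(raw)
print(suspected_duplicates(hazards))  # ['H2']
print(ready_for_next_phase(hazards))  # False: both candidates are still pending
```

Duplicate detection here is deliberately naive (exact match after lowercasing and collapsing whitespace) and only produces suggestions; hazards that mean the same thing but are phrased differently still need the engineer's judgment.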

LLMs add value to hazard identification, but only when embedded into structured, reviewable workflows that narrow the task, ground the output, and keep safety engineers in the loop.

If you are interested in trying our HARA Assistant yourself, you can check out the Fraunhofer IKS playground on our web page: https://playground.iks.fraunhofer.de/as-phalt/.

The playground demonstrates the structured, reviewable workflow described above and allows you to explore how LLM-supported hazard identification can be embedded into engineer-led HARA processes.

Don’t hesitate to contact us for questions, feedback, or collaboration opportunities.

Author: Florian Butsch, Fraunhofer IKS

Header image copyright © Antony Weerut – stock.adobe.com