In traditional data science (DS), a human expert, the data scientist, writes scripts for entire pipelines, including standard steps like data preprocessing, feature engineering, hyperparameter tuning, and finally computing metrics and visualizing insights. This work is tedious and repetitive, and modern LLMs can streamline much of it. For example, many users are exploring options like ChatGPT Advanced Data Analysis, where one simply uploads data and articulates entire problems as prompts.

However, such approaches have several limitations:

- instructions for real DS projects are too complex to be handled by one-shot prompting

- capabilities of generative models are still limited with respect to arbitrary mathematical computations

- data files are often very large and cannot simply be uploaded as attachments

- there are often privacy concerns and users may feel insecure uploading their enterprise data to cloud-based LLMs

- DS solutions are typically multi-step: as a solution workflow progresses, simply packing all instructions, text, code, data, and results into the running context makes it unintelligible for most LLMs, and often exceeds context length limits

Agentic systems now power many real-world information retrieval (IR) and machine learning (ML) applications, where LLMs perform various roles in complex task pipelines. DS is a unique instance of such complexity, tightly coupling IR, ML, and traditional statistics. Agentic systems with effective context management can alleviate most of the above problems for DS tasks. This has become an active research area, where the best systems usually achieve impressive results via sophisticated prompt chaining. However, we found that a typical end user often struggles to get a clear idea of how the task is actually being solved.
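To make the idea of context management concrete, here is a minimal sketch of one possible strategy: keep the most recent step in full, replace older steps with short summaries, and drop the oldest entries if a budget is still exceeded. This is an illustration only, not the method used by any particular system. The `Step` fields, the `build_context` helper, and the word-count stand-in for a tokenizer are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """One completed step of a multi-step DS solution (hypothetical structure)."""
    instruction: str  # what the step was asked to do
    output: str       # full code, text, or result produced by the step
    summary: str      # short description used once the step is no longer recent


def rough_token_count(text: str) -> int:
    # Crude stand-in for a real tokenizer: count whitespace-separated words.
    return len(text.split())


def build_context(steps: list[Step], budget: int, keep_recent: int = 1) -> str:
    """Assemble a context string: older steps as summaries, recent ones in full."""
    parts = []
    cutoff = max(len(steps) - keep_recent, 0)
    for i, step in enumerate(steps):
        body = step.output if i >= cutoff else step.summary
        parts.append(f"Step {i + 1}: {step.instruction}\n{body}")
    # Drop the oldest entries while the context is still over budget,
    # always keeping at least the latest step.
    while len(parts) > 1 and rough_token_count("\n\n".join(parts)) > budget:
        parts.pop(0)
    return "\n\n".join(parts)
```

With two steps and a generous budget, the first step appears only as its summary while the second keeps its full output; with a very small budget, only the latest step survives.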

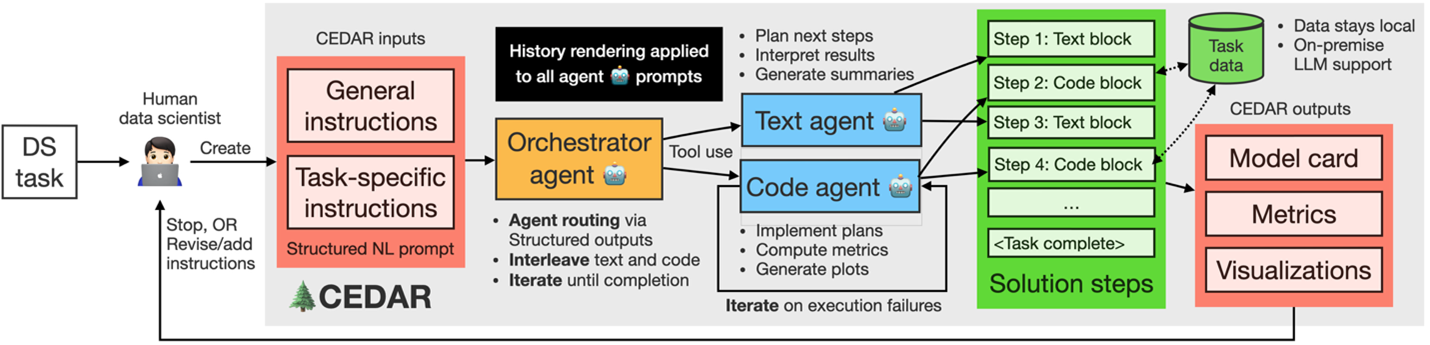

At the NLP team of Fraunhofer IIS, we demonstrate CEDAR, which aims to bring transparency and simplicity to DS solutions built with LLMs. CEDAR relies on effective context engineering, a collective term for strategies that maintain an optimal set of tokens during LLM inference, including all information that may land in the context from outside the prompts. An overview of our workflow is shown in the figure above. The data scientist formulates the task as a structured prompt that is passed on to an orchestrator agent. This agent routes text and code generation requests to sub-agents, used as tools, to generate a readable solution with short steps. Finally, the human inspects the outputs and, if necessary, revises the instructions for further iterations.
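The routing pattern described above can be sketched roughly as follows. This is a simplified illustration under stated assumptions, not CEDAR's actual implementation: the `StructuredPrompt` fields, the `TOOLS` registry, and the `orchestrate` function are hypothetical names, and the two sub-agents are plain stub functions standing in for LLM calls.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class StructuredPrompt:
    """Hypothetical structured task description written by the data scientist."""
    task: str
    data_description: str
    steps: list[str] = field(default_factory=list)


def text_agent(request: str) -> str:
    # Stub for an LLM-backed sub-agent that writes a natural language explanation.
    return f"Explanation: {request}"


def code_agent(request: str) -> str:
    # Stub for an LLM-backed sub-agent that writes a code snippet.
    return f"# code for: {request}\npass"


# The orchestrator sees sub-agents as named tools it can call.
TOOLS: dict[str, Callable[[str], str]] = {"text": text_agent, "code": code_agent}


def orchestrate(prompt: StructuredPrompt) -> list[dict[str, str]]:
    """Route each step to the text and code tools and collect a readable solution."""
    solution = []
    for step in prompt.steps:
        solution.append({
            "step": step,
            "explanation": TOOLS["text"](step),
            "code": TOOLS["code"](step),
        })
    return solution


if __name__ == "__main__":
    prompt = StructuredPrompt(
        task="Predict customer churn",
        data_description="customers.csv with one row per customer",
        steps=["Load the data", "Train a baseline model"],
    )
    for item in orchestrate(prompt):
        print(item["step"], "->", item["explanation"])
```

Keeping each step as a small record with its own explanation and code is what lets a human inspect the solution step by step and revise the instructions before the next iteration.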

The app can be used by beginner to expert-level IR, ML, NLP, and AI practitioners. IR and ML basics help, but core DS knowledge is not a prerequisite for interpreting the workflow: each solution step comes with natural language (NL) explanations. Beginners can get a feel for how basic data science tasks are solved. Intermediate users can assess the faithfulness of the generated solution relative to the original intent and gain insights into how agentic systems can be built to simplify DS. Experts can scrutinize LLM-generated code snippets and judge whether the generated solution mimics code written by a human data scientist. For more information on CEDAR, please visit https://arxiv.org/abs/2601.06606

Authors: Rishiraj Saha Roy, Chris Hinze, Luzian Hahn, and Fabian Küch from Fraunhofer IIS